The first question that arises is: why can’t you use relational database systems (RDBMS) with many disks to do large-scale analysis? Why would you need a completely new data framework? The answer comes from the way disk drives are evolving: seek time is improving more slowly than transfer rate. If the data access pattern is dominated by seeks, it will take longer to read or write large portions of the dataset than it would to stream through it sequentially at the transfer rate. You could also buy a massively parallel processing (MPP) SQL appliance to do the job for you. Beware, though, that MPP systems run on expensive, specialized hardware tuned for CPU, storage and network performance, whereas a cheaper solution such as Hadoop runs on a cluster of commodity servers.

You may be asking: if the old-school RDBMS approach is not likely to be appropriate for very large unstructured data, why not just use Hadoop with its classical MapReduce approach? It seems to be a mature technology: companies such as Facebook, Twitter and LinkedIn have used it to harness their Big Data, and the MapReduce model itself originated at Google. The Hadoop ecosystem emerged as a cost-effective way of working with such large data sets. It imposes a divide-and-conquer programming model, called MapReduce: computation tasks are broken into units that can be distributed around a cluster of commodity servers, thereby providing cost-effective, horizontal scalability. Underneath this computation model is a distributed file system called the Hadoop Distributed File System (HDFS). MapReduce involves a two-step batch process: a map step, which processes input records in parallel and emits intermediate key-value pairs, and a reduce step, which aggregates all the values that share the same key.
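The seek-versus-transfer argument can be checked with back-of-the-envelope arithmetic. The sketch below assumes purely illustrative figures (a 10 ms average seek, a 100 MB/s sequential transfer rate, a 1 TB dataset made of 100-byte records); these numbers are hypothetical, not measurements of any particular drive. It compares streaming through the entire dataset with touching just 1% of its records, paying one seek per record.

```python
# Hypothetical, illustrative drive and dataset parameters (not measurements).
SEEK_TIME_S = 0.010          # average seek time: 10 ms
TRANSFER_RATE_BPS = 100e6    # sequential transfer rate: 100 MB/s
DATASET_BYTES = 1e12         # 1 TB dataset
RECORD_BYTES = 100           # size of one record


def sequential_scan_seconds(dataset_bytes: float, rate_bps: float) -> float:
    """Time to stream through the whole dataset at the transfer rate."""
    return dataset_bytes / rate_bps


def random_access_seconds(num_records: float, seek_s: float,
                          record_bytes: float, rate_bps: float) -> float:
    """Time to access records one by one: one seek plus one small transfer each."""
    return num_records * (seek_s + record_bytes / rate_bps)


if __name__ == "__main__":
    total_records = DATASET_BYTES / RECORD_BYTES  # 1e10 records
    touched = 0.01 * total_records                # access only 1% of them
    seq = sequential_scan_seconds(DATASET_BYTES, TRANSFER_RATE_BPS)
    rnd = random_access_seconds(touched, SEEK_TIME_S, RECORD_BYTES, TRANSFER_RATE_BPS)
    print(f"sequential scan of all data: {seq / 3600:.1f} h")
    print(f"one seek per record for 1%:  {rnd / 86400:.1f} days")
```

Under these assumptions, streaming the full terabyte takes a few hours, while seeking to just 1% of the records takes more than a week, which is why batch frameworks favor large sequential reads.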
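To make the two-step MapReduce model concrete, here is a minimal single-process sketch of the classic word-count job in plain Python. It only imitates the map step, the grouping of intermediate pairs by key, and the reduce step in memory; the function names are hypothetical and this is not Hadoop’s API, which runs the same steps in parallel across a cluster and reads its input from HDFS.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Map step: emit a (word, 1) pair for every word in one input record."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group intermediate values by key, as the framework does between the two steps."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce step: aggregate all the values that share the same key."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    # On a real cluster, different input splits would be mapped on different nodes.
    intermediate = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(intermediate)
    return dict(reduce_phase(k, v) for k, v in grouped.items())


if __name__ == "__main__":
    docs = ["Big data is big", "Spark processes big data"]
    print(word_count(docs))
    # → {'big': 3, 'data': 2, 'is': 1, 'spark': 1, 'processes': 1}
```

Because each map call looks at one record and each reduce call looks at one key, both steps can be spread over many machines without coordination beyond the grouping step.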

There are many data frameworks that allow you to process data, and you probably already have a favorite tool in your tool belt. You’re familiar with its syntax and you know its caveats and limitations, so why learn yet another framework? If you are struggling in a project that has to deal with a high volume of unstructured data, and you need to extract business insight from it, you probably need a completely different computing framework: one that allows you to process the data with ease, without waiting hours for the results. Later in the article, we will jump into Databricks, a simple, integrated development environment where you can even use your familiar SQL skills to tackle the analysis of vast quantities of semi-structured data.

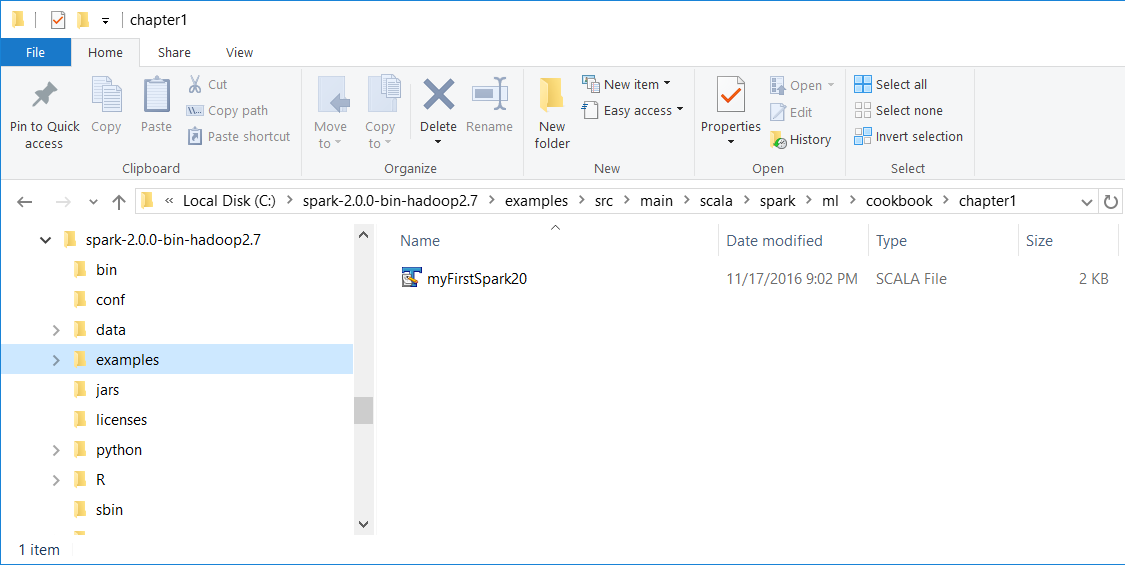


First I explain the advantages of Apache Spark; next, we’ll see some basic examples of how to use its shell to write your first application.


How to Start Big Data with Apache Spark - Simple Talk

There is no single definition of Big Data, but there is currently a lot of hype surrounding it. A practical operational definition is that organizations need Big Data tools when their data processing needs grow too big for traditional relational database systems (RDBMS). Knowing that Big Data is really big and more common every day does not mean that, starting tomorrow, you will be analyzing petabytes of data from the Large Hadron Collider (though I wish you would). However, there is quite a big chance that you will come across a project where you have to process data that can’t be stored on your personal flash drive. Hence, in this article I will start by explaining why it might be a good idea for a developer or data professional to get familiar with Apache Spark, a fast and general engine for large-scale data processing.